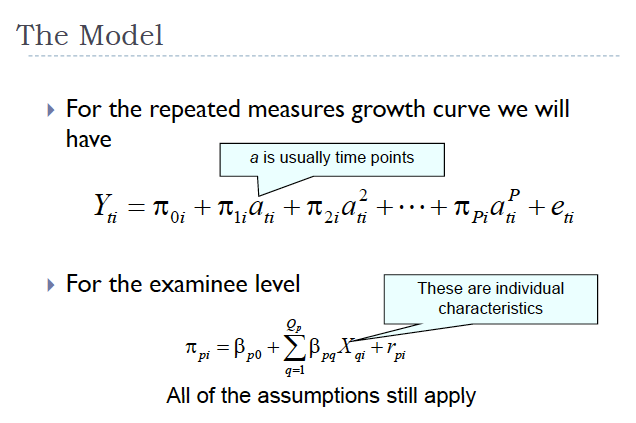

I find it disconcerting that Cuomo (and the feds) are punishing the New York City schools by withholding $400 million because they refuse to adopt “value-added” models that some consider “frauds” and “junk science.” Growth models were also an issue in the recent Chicago teacher strike. Who should we listen to on value-added modeling? The politicians or the experts? “Value-added” is a sexy catchphrase, but politicians typically don’t have a clue how these models work, even as they espouse their virtues. Want to have a little fun? Ask your local mayor, governor, Arne Duncan, etc. to specify a growth model. (See the model specification that I borrowed for the featured image.)

Fortunately for us, there are experts qualified to advise politicians (if they will listen; they haven’t yet) on how growth models actually function when measuring classroom achievement relative to teachers, and on the models’ serious limitations. The online peer-reviewed journal Education Policy Analysis Archives (EPAA) has dealt with growth models extensively. One of my favorites is “Value-added modeling of teacher effectiveness: An exploration of stability across models and contexts” by Xiaoxia A. Newton, Linda Darling-Hammond, Edward Haertel, and Ewart Thomas. They found:

Judgments of teacher effectiveness for a given teacher can vary substantially across statistical models, classes taught, and years. Furthermore, student characteristics can impact teacher rankings, sometimes dramatically, even when such characteristics have been previously controlled statistically in the value-added model. A teacher who teaches less advantaged students in a given course or year typically receives lower effectiveness ratings than the same teacher teaching more advantaged students in a different course or year. Models that fail to take student demographics into account further disadvantage teachers serving large numbers of low-income, limited English proficient, or lower-tracked students. We examine a number of potential reasons for these findings, and we conclude that caution should be exercised in using student achievement gains and value-added methods to assess teachers’ effectiveness, especially when the stakes are high.

We are also fortunate that EPAA has just published several articles and two video commentaries as part of its special issue, “Value-Added: What America’s Policymakers Need to Know and Understand.” The online issue was guest edited by Dr. Audrey Amrein-Beardsley, Dr. Clarin Collins, Dr. Sarah Polasky, and Ed Sloat. Here is the most recent expert work on value-added modeling:

Baker, B. D., Oluwole, J., & Green, P. C., III (2013). The legal consequences of mandating high stakes decisions based on low quality information: Teacher evaluation in the race-to-the-top era. Education Policy Analysis Archives, 21(5). Retrieved from http://epaa.asu.edu/ojs/article/view/1298

Pullin, D. (2013). Legal issues in the use of student test scores and value-added models (VAM) to determine educational quality. Education Policy Analysis Archives, 21(6). Retrieved from http://epaa.asu.edu/ojs/article/view/1160

Kersting, N. B., Chen, M., & Stigler, J. W. (2013). Value-added Teacher Estimates as Part of Teacher Evaluations: Exploring the Effects of Data and Model Specifications on the Stability of Teacher Value-added scores. Education Policy Analysis Archives, 21(7). Retrieved from http://epaa.asu.edu/ojs/article/view/1167

Graue, M. E., Delaney, K. K., & Karch, A. S. (2013). Ecologies of education quality. Education Policy Analysis Archives, 21(8). Retrieved from http://epaa.asu.edu/ojs/article/view/1163

Gabriel, R., & Lester, J. N. (2013). Sentinels guarding the grail: Value-added measurement and the quest for education reform. Education Policy Analysis Archives, 21(9). Retrieved from http://epaa.asu.edu/ojs/article/view/1165

Adler, M. (2013). Findings vs. interpretation in “The Long-Term Impacts of Teachers” by Chetty et al. Education Policy Analysis Archives, 21(10). Retrieved from http://epaa.asu.edu/ojs/article/view/1264

The experts have spoken. Will politicians listen?
