Did you think I was talking about someone in Donald Trump’s cabinet? Actually, I am not blogging about education reform today. Instead, I am taking up an important topic that gets very little attention: student evaluation of professors.

Two weeks ago I attended the AAERI conference in Ft. Myers, Florida. I went primarily because Sacramento State encourages faculty in the US Department of Education Pathway Fellows grant program to attend conferences with their advisees.

https://www.instagram.com/p/Bp4VE-iBIWv/

Jimmy Pedra Ojeda, my Pathway Fellow mentee, and I presented our recent paper, “Examining the myth of accountability, high-stakes testing and the achievement gap.” Jimmy did a great job on the presentation! I was proud of him. Before the meeting, Dr. Sherwood Thompson, Executive Director & CEO of AAER.org, emailed me to ask whether I would also be willing to participate in the conference’s “Great Debate” during a luncheon. Here is the description he provided.

The trend in higher education nowadays, especially in publicly supported universities, is to rely heavily on student evaluations and ratings of faculty effectiveness. Relying on student ratings is also a practice in post-tenure review. Should we believe student ratings reflect a faculty member’s ability to teach effectively? Are students qualified to rate teaching effectiveness and performance? What if a faculty member’s coursework is rigorous; does that change the outcome of the evaluation results?

The primary motion under debate was: Student evaluations and ratings are a reliable indicator of faculty teaching performance. I was asked to argue against the motion. The debate followed the Cambridge style format. (Also check out our Intelligence Squared debate about charter schools, which was nationally syndicated on PBS, here.) My opponent in the debate was Dr. Dina Schwam, Assistant Professor of Psychology and Human Services at Pennfield College.

Here are my opening remarks from the debate.

First of all, I should say that student evaluations do matter a great deal. They matter particularly for junior faculty, graduate students, lecturers, and other untenured faculty.

Clearly, at most institutions, these evaluations are given serious consideration when departments hire and grant tenure to faculty. As a result, we need to take a careful look at the research.

So what does the preponderance of the research say about whether students are good judges of their own learning of course content?

Carrell and West (2010) found that students aren’t the best judges of whether or not they’ve learned course content. Furthermore, students may not be the best judges of the quality of instruction, because by definition they are not yet experts in the field they are being taught.

In fact, White and Wong Gonzales (2016) found that student learning and students’ evaluations of faculty are not related, and that large-sample studies showed no or only minimal correlation between student evaluation ratings and learning. This suggests that a student may have learned much of the course material but still rate an instructor negatively. Conversely, White and Wong Gonzales found that students evaluate instructors positively in part because they weren’t challenged enough in class.

In essence, students are not typically evaluating content and pedagogy but rather the instructor’s soft skills: how comfortable and how cared for they feel. As a result, evaluations more often measure how much students like the instructor, not necessarily how much students learn.

What does the research say about bias as a confounding factor?

Basow and Silberg (1987) found that male and female students gave female professors significantly poorer ratings than they gave male professors, even when controlling for course division, years of teaching, and tenure status.

Riniolo et al. (2010) discovered that professors perceived as attractive received student evaluations about 0.8 of a point higher on a 5-point scale than professors not seen as attractive.

Wilson, Beyer, and Monteiro (2014) compared student evaluations of professors aged 35 or younger with those of professors aged 36 or older and found that evaluations suffered more with age for female professors than for male professors.

Because these evaluations shape how faculty are hired, promoted, and given raises, these social biases should be deeply troubling.

Does the research literature support the validity of student evaluations?

In general, the preponderance of the research literature suggests that we currently have no accurate measures of student growth in most courses; therefore, student evaluations have no objective scale against which they can be validated. In a review of the literature on student evaluations of teaching, Stark and Freishtat (2014) concluded:

“The common practice of relying on averages of student teaching evaluation scores as the primary measure of teaching effectiveness for promotion and tenure decisions should be abandoned for substantive and statistical reasons: There is strong evidence that student responses to questions of ‘effectiveness’ do not measure teaching effectiveness.”

Another threat to the validity of student evaluations and ratings is that the type of course has been found to influence ratings. For example, Pallet (2006) found that required classes tend to score lower than electives.

My opponent in this debate cited Gravestock and Gregor-Greenleaf (2008), who actually recognized the problems with the reliability and validity of student evaluations. In fact, they stated,

“Educational scholars have examined issues of bias, have identified concerns regarding their statistical reliability and have questioned their ability to accurately gauge the teaching effectiveness of faculty.”

Conclusion

As the director overseeing thirteen educational leadership and policy doctoral faculty at California State University, Sacramento, I have found that student evaluations as currently constructed can be a red flag when scores are consistently very low. Otherwise, considering all the evidence, their usefulness is often very limited.

In fact, students don’t have much incentive to provide thoughtful feedback at the end of the term, when it’s too late for a professor to make any adjustments that will benefit them.

For decades, academic institutions have collected student teaching evaluations and factored them into institutional decisions. Considering the research, these instruments do not always legitimately assess teaching competence. Hornstein (2016) argued that, as currently constructed, these instruments at best capture student opinions about faculty teaching capability, and student opinion and satisfaction are not necessarily legitimate measures of faculty members’ actual teaching capability. In essence, they don’t measure what they say they measure.

How many of you have received a set of student evaluations that puzzled you? How many of you have suspected that the validity of those evaluations might be a little bit questionable? Here are some actual student evaluation comments gathered from across the internet…

“This course kept me out of trouble from 2-4:30 on Tuesdays and Thursdays.”

“TA steadily improved throughout the course. I think he started drinking and it really loosened him up.”

“Information was presented like a ruptured fire hose. Spraying in all directions, no way to stop it.”

“She looks like a fried Barbie doll and acts like one too”

“I hear dude was in the Israeli army; I wouldn’t be surprised if he’d killed someone with his own hands”

“I guess he is hard to understand if you’re a backwoods redneck who only speaks ‘merican”

These are just a few real-life evaluations, and they should give us pause given the high-stakes nature of student evaluations, which often determine the careers of junior faculty, graduate students, and lecturers. In my own department, faculty policy does not allow reappointment to doctoral faculty status unless an instructor averages 4.0 out of 5 over a three-year period. That’s high stakes.

What are some alternatives to the current system of student evaluation?

- Expert faculty evaluations

- Students provide feedback in retrospect

- Rotating expert student evaluators

In sum, because the literature has extensively demonstrated bias, a lack of validity, students’ limited ability to quickly provide expert feedback, and other confounding factors, it is very clear that student evaluations and ratings are not currently a reliable indicator of faculty teaching performance.

Do you agree or disagree that student evaluations and ratings are not a consistent and reliable indicator of faculty teaching performance? Honestly, I don’t believe that student feedback should be ignored. But clearly we need to rethink our evaluation process so that the results are more valid.

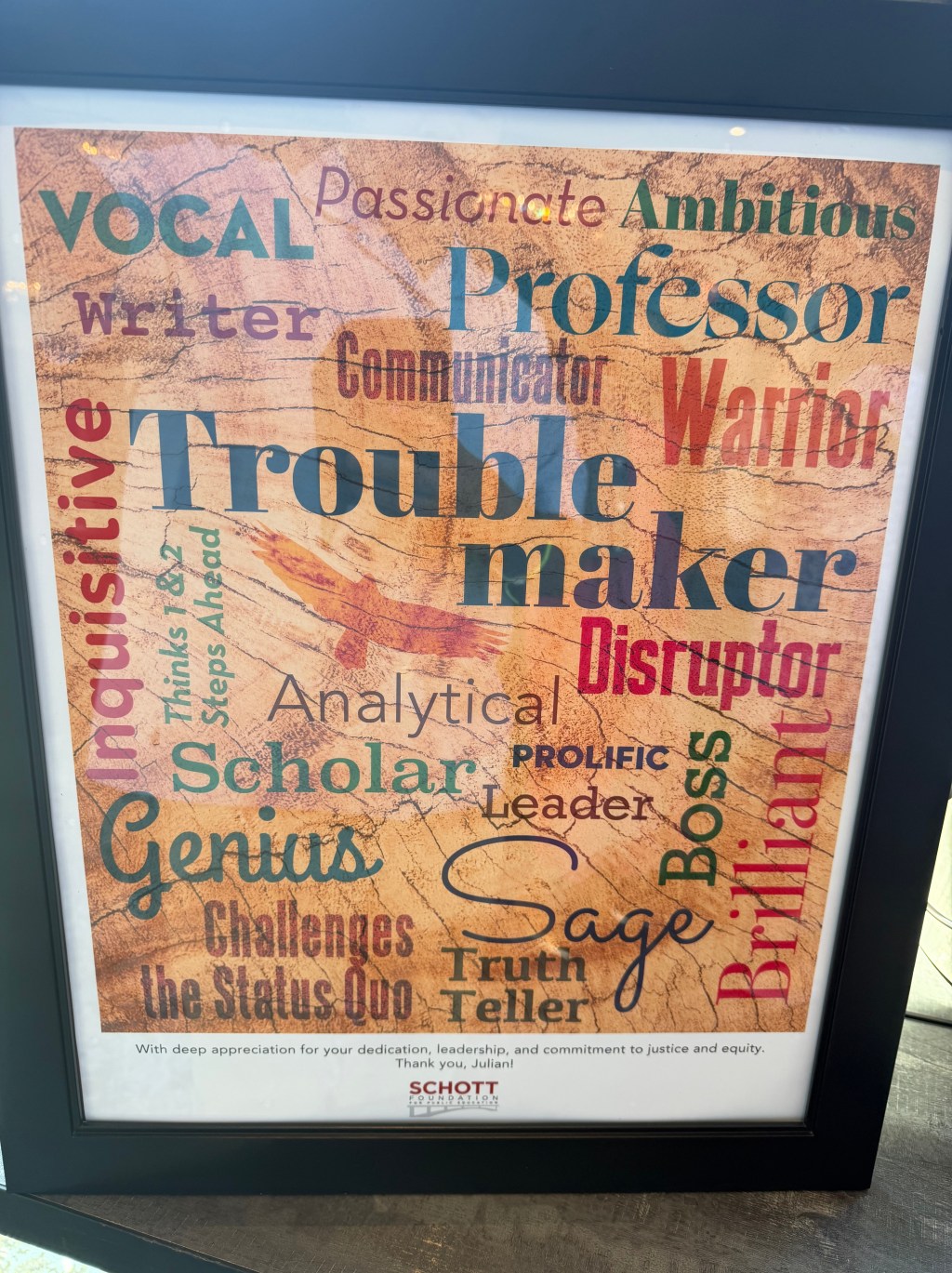

https://www.instagram.com/p/Bp45x5cBwe4/

For more conversation in the media click here.


Check out and follow my YouTube channel here.

Twitter: @ProfessorJVH

Click here for Vitae.
